Artificial Intelligence has stoked the fascination of futuristic dreamers since Isaac Asimov’s visionary novels like I, Robot, and the Foundation series.

In March 2016, many observers felt we had taken a step closer to entering this strange new world.

Millions watched in disbelief as Google’s AlphaGo program roundly trounced the South Korean grandmaster of the board game, Lee Se-dol. Go, a traditional Chinese game invented over 2,500 years ago, is an abstract strategy game that is notoriously hard to master. Its scope and complexity are such that it makes chess look a bit like a game of noughts and crosses scribbled on a napkin; it is often said that the number of possible Go positions rivals the roughly 10 to the 80th power atoms in the observable universe. Yet that comparison undersells the true total: the number of legal positions is on the order of 10 to the 170th power.

The contest wasn’t even close; in the best-of-five match, AlphaGo raced to an unassailable 3-0 lead and went on to win 4-1, leaving Lee speechless at the ‘perfect’ gameplay. One particular move in game 2 even generated a cult following. The Go cognoscenti speak of ‘Move 37’ in hushed tones – a move so unexpected, so compelling, so beautiful, that it seemed almost transcendental. And it was just a clever piece of software that did that.

[Image: A world-beater looking worried]

Google achieved this through its research into ‘deep neural networks’ – the closest thing to an artificial brain anyone has seen. They’re a biologically inspired programming paradigm that allows computers to learn from observational data. They’re not just smart; they progressively hone their skills based on their previous experiences. A bit like humans, you might say. So we now have computers that learn – slowly at first, and then at an accelerating rate. Scary stuff.

How, then, does this relate to language?

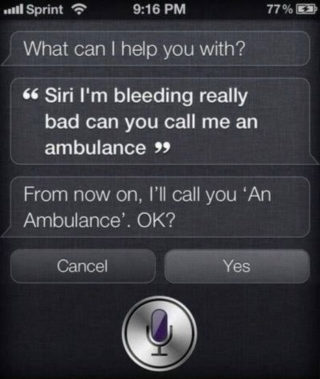

Linguistic Artificial Intelligence is already involved in the daily lives of millions, at some level. Take Cortana, Microsoft’s little helper, or its more famous cousin Siri, which has been responding sagely to the silly questions of iPhone users around the world since 2011.

Except…

Siri still has some way to go.

Meet SyntaxNet. Or, to use its rather excellent pet name, Parsey McParseface.

This is Google’s software for parsing natural language syntax. ‘Parsing’ is a term from linguistics for analysing a string of units and working out the correct hierarchical combination of these units. Just like you’re doing now. Honestly.

Hordes of bespectacled linguists the world over would jump at the chance to explain that Parsey McParseface is learning how to decode dependency relations, which are, more or less, the most fundamental nitty-gritty mechanism for combining the building blocks of human language. Again, in your own way, in reading this, you are proving yourself to be a world expert on the inner workings of dependency relations.
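For the curious, a dependency relation is simply a labelled link from one word to the word it depends on. Here is a minimal sketch of how such a parse might be written down. The sentence is annotated by hand rather than by any parser, and the names `Token` and `describe`, along with the relation labels, are made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class Token:
    index: int     # 1-based position in the sentence
    word: str
    head: int      # index of the word this token depends on; 0 means the root
    relation: str  # illustrative label for the dependency


# Hand-annotated parse of "Parsey reads long sentences" (not parser output).
SENTENCE = [
    Token(1, "Parsey", 2, "subject"),
    Token(2, "reads", 0, "root"),
    Token(3, "long", 4, "modifier"),
    Token(4, "sentences", 2, "object"),
]


def describe(tokens):
    """Return one readable line per dependency arc, head first."""
    words = {t.index: t.word for t in tokens}
    words[0] = "ROOT"
    return [f"{words[t.head]} --{t.relation}--> {t.word}" for t in tokens]


for line in describe(SENTENCE):
    print(line)
```

Every word points at exactly one head, and the verb hangs off an imaginary root, so the whole sentence forms a tree.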

The gory guts of this theoretical stuff must be awfully fascinating to someone, somewhere, but I’ll just put it this way.

Parsey McParseface has the potential to be a lot better at parsing sentences than anything we’ve seen before (barring the human mind itself).

Its developers claim that as it receives a string of input, it can break the phrases down and tag each component with labels like Noun, Verb, Adjective, Subject and Object more accurately than earlier parsers could.

At this juncture, you may be feeling a little deflated by the realization that the future is here, and it’s rather less sparkly and levitating than you’d been promised.

Nonetheless, this is still a fairly impressive achievement. Even humans still sometimes have problems performing this tag-assigning task. Just think of headlines like:

“Juvenile Court to Try Shooting Defendant”

Or

“British Left Waffles on Falkland Islands”

Both of these are likely to be headscratchers precisely because we first assign the wrong tags to some of the words – we then rely on our general knowledge and human common sense to work out which of the two potential structures is the more likely in each case.
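To make the ambiguity concrete, here is a small sketch that writes out both readings of the second headline as tag sequences and shows where they part ways. The tags are assigned by hand using the informal labels mentioned above; nothing here is parser output, and the name `differing_words` is hypothetical.

```python
HEADLINE = ["British", "Left", "Waffles", "on", "Falkland", "Islands"]

# Two hand-written tag assignments for the same six words.
READINGS = {
    "the political left dithers": [
        "Adjective", "Noun", "Verb", "Preposition", "Noun", "Noun",
    ],
    "the British abandoned their breakfast": [
        "Noun", "Verb", "Noun", "Preposition", "Noun", "Noun",
    ],
}


def differing_words(tags_a, tags_b, words):
    """List (word, tag_a, tag_b) for every word whose tag changes."""
    return [(w, a, b) for w, a, b in zip(words, tags_a, tags_b) if a != b]


first, second = READINGS.values()
for word, tag_a, tag_b in differing_words(first, second, HEADLINE):
    print(f"{word}: {tag_a} vs {tag_b}")
```

Only the first three words change their tags, yet that is enough to turn a political story into a culinary one.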

Natural language is a very difficult field for any software to break into, because sentences can get very convoluted. A sentence of 20-30 words can quite feasibly have hundreds or even thousands of potential syntactic structures. As we produce longer sentences, there is a combinatorial explosion. This means that a parser like you, me, or indeed Parsey McParseface, must maintain multiple partial hypotheses about the correct form at each stage of the parse – like the chess grandmaster living five moves into the future.
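One common way to keep several partial hypotheses alive at once is a technique called beam search. The sketch below is a toy illustration of that idea, not a description of how Parsey McParseface works internally. Every score in it is invented, and the names `WORD_TAGS`, `FOLLOWS` and `beam_search` are hypothetical.

```python
import heapq

# Invented scores: how plausible each tag is for each word on its own.
WORD_TAGS = {
    "British": {"Adjective": 0.6, "Noun": 0.4},
    "Left": {"Noun": 0.5, "Verb": 0.5},
    "Waffles": {"Verb": 0.5, "Noun": 0.5},
}

# Invented scores for one tag following another; unlisted pairs get 0.1.
FOLLOWS = {
    ("Adjective", "Noun"): 0.9,
    ("Noun", "Verb"): 0.8,
    ("Verb", "Noun"): 0.7,
}


def beam_search(words, beam_width=2):
    """Tag words left to right, keeping only the best few partial guesses."""
    beam = [(1.0, [])]  # each hypothesis is (score, tags chosen so far)
    for word in words:
        candidates = []
        for score, tags in beam:
            for tag, tag_score in WORD_TAGS[word].items():
                link = FOLLOWS.get((tags[-1], tag), 0.1) if tags else 1.0
                candidates.append((score * tag_score * link, tags + [tag]))
        # Prune: anything outside the top beam_width is forgotten for good.
        beam = heapq.nlargest(beam_width, candidates, key=lambda c: c[0])
    return beam


for score, tags in beam_search(["British", "Left", "Waffles"]):
    print(f"{score:.3f}", tags)
```

With a beam width of two and these made-up numbers, both readings of the headline survive to the end, with the "political left" reading ranked first. Shrink the beam to one and the parser commits early, with no way back if its first guess was wrong.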

Arguably the most fascinating bit about this story is that Google, having sunk millions into developing the most advanced linguistic AI program, is giving it away for free. As of May 12th 2016, Parsey McParseface is open source, which means that a whole generation of software designers can use its algorithms to make more responsive and accurate language recognition-based apps and programs.

Of course, it is far from perfect, and flawless machine handling of language at some point in the future is far from a foregone conclusion. Parsey McParseface is said to do very well with well-formed English sentences, but human language doesn’t always come ‘well-formed’ – that’s just not how we speak. And the intricacies of idiomatic expression are still, unsurprisingly, not accounted for.

Moreover, Parsey McParseface is still limited to English alone. There is every chance that other languages will offer greater challenges than English does.

Nonetheless, the very existence of this deep learning technology is fascinating. We will be watching closely to see where Google can go with it.